From Drowning in Logs to Conversing with Your Data: Introducing Maxmallow

Picture this: It's 3 AM, your AI agent is behaving erratically in production, and users are complaining. You open your observability dashboard and you're greeted with thousands of traces, sessions, and log entries. You know the answer is somewhere in there, but where do you even start?

This is the reality for teams running AI agents in production. The same systems that generate incredible value also produce an overwhelming amount of observability data. Traditional approaches force you to become a database query expert, toggling between dashboards, writing complex filters, and manually correlating events across multiple sessions. By the time you find the root cause, hours have passed and the damage is done.

What if you could just ask?

That's why we're building Maxmallow, an analytics chatbot for Maxim: a natural-language interface that lets you have a conversation with your AI observability logs. Instead of crafting queries, you simply ask questions in plain English and get instant, intelligent answers.

The Problem: Too Much Data, Not Enough Insight

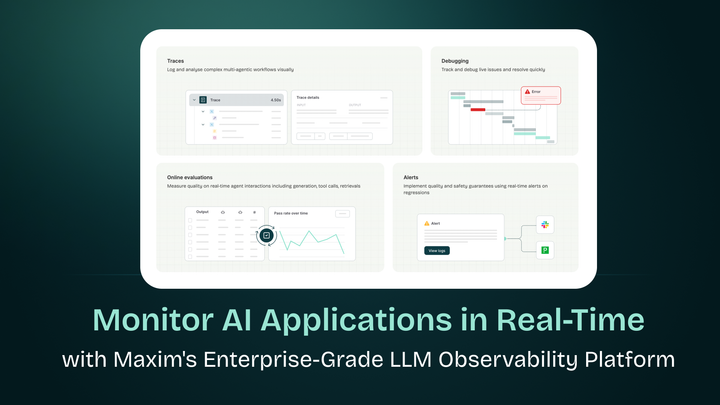

At Maxim, we help teams ship AI agents 5x faster through comprehensive evaluation and observability. Our platform captures everything: every LLM call, every tool invocation, every decision your agent makes, complete with latency metrics, token usage, and quality scores.

This visibility is powerful. It's also overwhelming.

When debugging agent behavior in production, teams face several challenges:

Needle-in-a-haystack debugging: You need to find specific failure patterns across thousands of sessions. Was it a retrieval issue? A prompt problem? A tool execution failure? Answering these questions requires filtering through vast amounts of structured data.

Cross-session analysis: Understanding systemic issues means analyzing patterns across multiple user interactions, requiring complex aggregations and comparisons that are tedious to construct manually.

Time-sensitive investigations: Production incidents don't wait. When users report issues or metrics spike, you need answers immediately, not after spending 30 minutes writing SQL queries.

Context switching overhead: Moving between trace views, session lists, metric dashboards, and raw logs fragments your mental model and slows down investigation.

The fundamental issue is that traditional query interfaces (whether dashboards, filters, or SQL) create friction between your question and the answer. They force you to translate your debugging intent into the language of database queries rather than letting you focus on solving the actual problem.

The Solution: QA Over Observability Logs

Maxmallow transforms how you interact with Maxim's observability data. Instead of querying databases, you have a conversation:

"Show me all sessions where users gave negative feedback in the last 24 hours"

The chatbot instantly retrieves the relevant sessions, presenting them in an easily scannable format. But it doesn't stop there. You can follow up naturally:

"What were the common characteristics of these sessions?"

Now the chatbot analyzes the failure patterns, identifying that 80% involved international refund questions and that retrieval scores were consistently below 0.65 for the relevant documents.

"Compare this to sessions with positive feedback"

The chatbot surfaces the key differences: successful sessions had higher retrieval scores and different prompt variations.

This conversational flow mirrors how you actually debug: iteratively, following hunches, drilling deeper as patterns emerge. The chatbot handles all the data wrangling behind the scenes, executing complex queries, joining across traces and sessions, and presenting insights in plain language.

How It Works: Natural Language Meets Structured Data

Our analytics chatbot is built specifically for Maxim's observability schema. It understands:

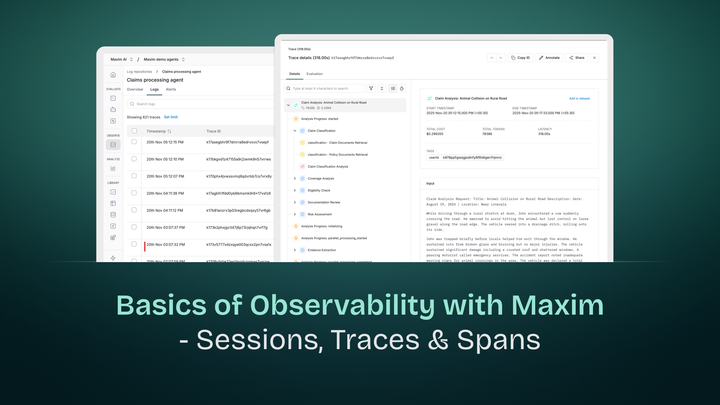

Agent execution patterns: Sessions, traces, spans, tool calls, and how they relate to each other in multi-turn agentic workflows

Performance metrics: Latency, token counts, costs, and quality scores at every level of granularity

Evaluation results: Automated and human evaluations, feedback scores, and quality thresholds

Temporal relationships: Time-based queries, trend analysis, and comparing behavior across different time windows
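To make the session/trace/span hierarchy concrete, here is a minimal Python sketch of how these pieces might nest. The class and field names (`Session`, `Trace`, `Span`, `kind`, `feedback_score`) are hypothetical and chosen for illustration; they are not Maxim's actual schema or SDK types.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Iterator, Optional


@dataclass
class Span:
    """One unit of work inside a trace: an LLM call, a retrieval step, or a tool call."""
    name: str
    kind: str  # hypothetical values: "llm", "retrieval", "tool"
    latency_ms: float
    tokens: int = 0
    children: list[Span] = field(default_factory=list)

    def walk(self) -> Iterator[Span]:
        """Yield this span and every nested span, depth first."""
        yield self
        for child in self.children:
            yield from child.walk()


@dataclass
class Trace:
    """One agent execution, for example a single user turn."""
    trace_id: str
    root: Span


@dataclass
class Session:
    """A multi-turn conversation that groups several traces."""
    session_id: str
    traces: list[Trace] = field(default_factory=list)
    feedback_score: Optional[float] = None  # user rating, if one was given

    def spans(self) -> Iterator[Span]:
        for trace in self.traces:
            yield from trace.root.walk()

    def total_tokens(self) -> int:
        return sum(span.tokens for span in self.spans())

    def tool_calls(self) -> list[Span]:
        return [span for span in self.spans() if span.kind == "tool"]


# Usage: one turn in which the agent calls a search tool before answering.
turn = Trace("t1", Span("agent", "llm", 1200, tokens=800, children=[
    Span("search_knowledge_base", "tool", 3100),
    Span("answer", "llm", 900, tokens=400),
]))
session = Session("conv_789", [turn], feedback_score=1.0)
print(session.total_tokens())                   # 1200
print([s.name for s in session.tool_calls()])   # ['search_knowledge_base']
```

Walking the tree depth first is what lets per-session questions ("how many tokens did this conversation use?") roll up from span-level data.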

When you ask a question, the chatbot:

- Parses your intent using LLM-powered query understanding

- Translates it into structured queries against Maxim's log repository

- Executes optimized lookups across distributed traces and session data

- Synthesizes results into natural language answers with supporting evidence

- Maintains context for follow-up questions and deeper investigation
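The loop above can be sketched as a toy in a few lines of Python. This is an illustration under strong assumptions, not how Maxmallow is implemented: `parse_intent` uses keyword rules where the real system uses an LLM, the "log repository" is an in-memory list of dictionaries, and every name here (`StructuredQuery`, `parse_intent`, `Conversation`) is hypothetical. It does show the shape of the steps, including how keeping the previous result set lets a follow-up about "these" sessions refine it.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional

Predicate = Callable[[dict], bool]


@dataclass
class StructuredQuery:
    """Hypothetical intermediate form between the question and the data store."""
    filters: list[Predicate]
    refine_previous: bool  # True when the question refers back to earlier results


def parse_intent(question: str) -> StructuredQuery:
    """Stand-in for LLM-powered parsing: simple keyword rules."""
    q = question.lower()
    filters: list[Predicate] = []
    if "negative feedback" in q or "low rating" in q:
        filters.append(lambda s: s["rating"] <= 2)
    if "refund" in q:
        filters.append(lambda s: "refund" in s["transcript"].lower())
    return StructuredQuery(filters, refine_previous="these" in q)


@dataclass
class Conversation:
    sessions: list[dict]                      # the toy "log repository"
    last_result: Optional[list[dict]] = None  # context kept for follow-ups

    def ask(self, question: str) -> str:
        query = parse_intent(question)                           # parse + translate
        pool = self.sessions
        if query.refine_previous and self.last_result is not None:
            pool = self.last_result
        matches = [s for s in pool if all(f(s) for f in query.filters)]  # execute
        self.last_result = matches                               # maintain context
        ids = ", ".join(s["id"] for s in matches) or "none"
        return f"{len(matches)} matching session(s): {ids}"      # synthesize


logs = [
    {"id": "conv_1", "rating": 1, "transcript": "Where is my refund?"},
    {"id": "conv_2", "rating": 5, "transcript": "Thanks, refund received."},
    {"id": "conv_3", "rating": 2, "transcript": "The app crashed."},
]
chat = Conversation(logs)
print(chat.ask("Show me sessions with negative feedback"))  # 2 matching session(s): conv_1, conv_3
print(chat.ask("Which of these mention a refund?"))         # 1 matching session(s): conv_1
```

Storing `last_result` on the conversation object is the simplest possible form of "maintaining context"; a production system would also need to carry over time windows, filters, and entity references across turns.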

The result feels like pair programming with an expert who has perfect knowledge of your entire production history.

Real-World Debugging Scenarios

Let's walk through how the analytics chatbot accelerates common debugging workflows:

Scenario 1: Investigating User Complaints

Without the chatbot: You receive a complaint that the support agent is "making things up" about refund policies. You open the dashboard, filter by date range, scan through hundreds of sessions, export to CSV, manually grep for "refund", examine individual traces, and eventually discover the pattern.

With the chatbot:

- You: "Find sessions where users mentioned refunds and gave low ratings"

- Chatbot: Returns 23 sessions with excerpts and ratings

- You: "What went wrong in these conversations?"

- Chatbot: "Analysis shows retrieval failures: international policy documents consistently scored below 0.65, while irrelevant domestic policies scored higher. The LLM generated responses from wrong context."

- You: "Show me the exact retrieval results for session conv_789"

- Chatbot: Displays full retrieval chain with scores

Time saved: Hours reduced to minutes.
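The chatbot's diagnosis in this scenario boils down to a simple check over retrieval steps: did the relevant documents score below the 0.65 threshold while something irrelevant ranked first? Here is a minimal sketch, assuming each session's retrieval results are available as (document id, score) pairs and that you can supply a predicate for what counts as relevant. The function `retrieval_failures` and the document ids are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Hit = Tuple[str, float]  # (document id, retrieval score)


def retrieval_failures(
    retrievals: Dict[str, List[Hit]],
    is_relevant: Callable[[str], bool],
    threshold: float = 0.65,
) -> List[str]:
    """Return ids of sessions where no relevant document reached `threshold`
    and the top-ranked document was irrelevant."""
    failed = []
    for session_id, hits in retrievals.items():
        if not hits:
            continue  # nothing was retrieved; a different failure mode
        best_relevant = max((score for doc, score in hits if is_relevant(doc)), default=0.0)
        top_doc, _ = max(hits, key=lambda hit: hit[1])
        if best_relevant < threshold and not is_relevant(top_doc):
            failed.append(session_id)
    return failed


retrievals = {
    "conv_789": [("policy/domestic-refunds", 0.81), ("policy/international-refunds", 0.58)],
    "conv_790": [("policy/international-refunds", 0.88), ("policy/domestic-refunds", 0.40)],
}
print(retrieval_failures(retrievals, lambda doc: "international" in doc))  # ['conv_789']
```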

Scenario 2: Performance Optimization

- You: "Which tool calls have the highest latency this week?"

- Chatbot: "The search_knowledge_base tool averages 3.2s, about twice the average latency of the other tools. 156 calls exceeded 5s."

- You: "Show me examples of slow searches"

- Chatbot: Presents trace details with query patterns

- You: "What were users asking when this happened?"

- Chatbot: "85% involved complex multi-part questions requiring broad semantic search across 10,000+ documents."

Now you understand the issue: complex queries against a large corpus need optimization, perhaps through better indexing or query decomposition.
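The first answer in this scenario is essentially a group-by over tool-call spans. Here is a sketch of that aggregation, assuming tool calls can be exported as (tool name, latency in seconds) pairs. The function `latency_report` and the `get_order_status` tool are hypothetical.

```python
from collections import defaultdict
from statistics import mean
from typing import Iterable, List, Tuple


def latency_report(
    calls: Iterable[Tuple[str, float]], slow_threshold_s: float = 5.0
) -> List[Tuple[str, float, int]]:
    """Group calls by tool and return (tool, mean latency, calls over threshold),
    slowest tool first."""
    by_tool = defaultdict(list)
    for tool, latency in calls:
        by_tool[tool].append(latency)
    rows = [
        (tool, mean(latencies), sum(1 for x in latencies if x > slow_threshold_s))
        for tool, latencies in by_tool.items()
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)


calls = [
    ("search_knowledge_base", 2.0), ("search_knowledge_base", 6.0),
    ("get_order_status", 1.0), ("get_order_status", 2.0),
]
for tool, avg, slow in latency_report(calls):
    print(f"{tool}: mean {avg:.1f}s, {slow} call(s) over 5s")
# search_knowledge_base: mean 4.0s, 1 call(s) over 5s
# get_order_status: mean 1.5s, 0 call(s) over 5s
```

Reporting the count of calls over a fixed threshold alongside the mean matters because a handful of very slow calls can hide behind an acceptable average.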

Scenario 3: Rollout Validation

- You: "Compare session success rates between the new prompt version and the previous version"

- Chatbot: "New version (v2.1): 87% success rate across 450 sessions. Previous version (v2.0): 79% across 380 sessions. Improvement is statistically significant (p=0.003)."

- You: "Where did v2.1 improve most?"

- Chatbot: "Multi-step reasoning tasks improved by 23%. Tool selection accuracy increased from 81% to 94%."

Instant validation that your changes had the intended effect, with statistical confidence.
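The scenario doesn't say which statistical test backs the "statistically significant" claim. One standard choice for comparing two success rates is a two-proportion z-test, sketched below; treating each session as an independent pass/fail outcome is an assumption. The counts 392/450 and 300/380 are our own rounding of the 87% and 79% rates in the example.

```python
import math
from typing import Tuple


def two_proportion_z_test(
    successes_a: int, n_a: int, successes_b: int, n_b: int
) -> Tuple[float, float]:
    """Two-sided z-test for a difference between two success rates,
    using the pooled standard error."""
    p_a, p_b = successes_a / n_a, successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the standard normal
    return z, p_value


z, p = two_proportion_z_test(392, 450, 300, 380)  # v2.1 vs v2.0
print(f"z = {z:.2f}, two-sided p = {p:.4f}")
```

With these rounded counts the test gives z of roughly 3.1 and p of roughly 0.002, the same order of magnitude as the figure in the example; the exact value depends on the raw counts and on the test chosen.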

Why This Matters for Production AI

Running AI agents in production is fundamentally different from traditional software. Agents are non-deterministic, multi-modal, and operate across complex reasoning chains. A single user interaction might trigger dozens of LLM calls, retrieval operations, and tool executions.

This complexity makes traditional debugging approaches inadequate. You can't just check error logs: you need to understand decision pathways, inspect retrieval quality, analyze token usage patterns, and correlate behavior across dimensions that traditional logging systems weren't designed for.

The analytics chatbot bridges this gap by making sophisticated analysis accessible through natural language. It democratizes observability, allowing everyone on your team, from engineers to product managers to customer success, to understand what's happening in production.

More importantly, it accelerates the feedback loop. Faster debugging means faster iterations. Faster iterations mean better agents. Better agents mean happier users and more successful deployments.

Built on Maxim's Observability Foundation

The chatbot is only as good as the data it queries. That's why it's built on top of Maxim's comprehensive observability platform:

Distributed tracing: Every agent execution is captured as a hierarchical trace, with full context for every LLM call, retrieval step, and tool invocation

Session management: Multi-turn conversations are grouped as sessions, making it easy to understand end-to-end task execution

Automated evaluation: Continuous quality checks run on production traffic, providing real-time feedback on agent performance

Integration ecosystem: Works seamlessly with LangChain, LangGraph, OpenAI Agents, CrewAI, and other major AI frameworks

This foundation ensures that when you ask the chatbot a question, you're querying rich, structured observability data, not fragmented logs scattered across systems.

The Future of AI Observability

We believe conversational interfaces will become the primary way teams interact with observability data for AI systems. The complexity is simply too high for point-and-click dashboards to remain effective at scale.

Maxmallow represents the first step in this direction. Over time, we envision it becoming even more proactive:

- Automated anomaly explanation: "Your agent's success rate dropped 15% in the past hour. The issue is slow retrieval for queries containing technical jargon. Here's why..."

- Predictive insights: "Based on current trends, you'll exceed your token budget by 30% this month unless you optimize these three high-frequency queries"

- Suggested improvements: "Sessions failing on task X have a common pattern. Consider adding this example to your prompt or adjusting your retrieval parameters"
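In its simplest form, a budget forecast like the one in the second bullet could be a straight-line projection of month-to-date usage. The sketch below assumes a constant daily rate, which real forecasting would refine; the function name and the numbers are illustrative, not taken from Maxim.

```python
def projected_overrun(
    used_so_far: float, days_elapsed: int, days_in_month: int, budget: float
) -> float:
    """Project month-end usage at the average daily rate so far and return
    the overrun as a fraction of the budget (negative means under budget)."""
    if days_elapsed <= 0:
        raise ValueError("days_elapsed must be positive")
    daily_rate = used_so_far / days_elapsed
    projected = daily_rate * days_in_month
    return projected / budget - 1.0


# 6.5M tokens used in the first 15 days of a 30-day month, against a 10M budget.
overrun = projected_overrun(6_500_000, 15, 30, 10_000_000)
print(f"Projected overrun: {overrun:.0%}")  # Projected overrun: 30%
```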

The goal is to shift from reactive debugging to proactive optimization, with the chatbot serving as your AI reliability co-pilot.

Getting Started

Maxmallow is currently in development at Maxim. If you're already using Maxim for AI observability and are interested in learning more about how it could help your team debug agents in production, reach out to us.

And if you're not yet using Maxim, sign up for our free tier to start instrumenting your AI agents with comprehensive observability. Because before you can ask questions about your data, you need to capture it properly.

Ready to move from drowning in logs to conversing with your data? The future of AI observability is conversational. Join us in building it.